Package management, dependency management, configuration management, and who knows how many other forms of management exist when it comes to computing systems. We have managers for managers for operators of applications. The roles and responsibilities of different tools can, at times, get a little blurred. I sometimes find that’s the case with Helm. Is it a configuration management tool like Chef or a package manager like apt? This even raises the question: how do configuration managers, like Puppet, and package managers, like yum, relate to each other, and what does any of this mean for Helm and Kubernetes?

To understand where Helm ends, it helps to understand where other tools begin and the interfaces they have with Helm or Helm has with them.

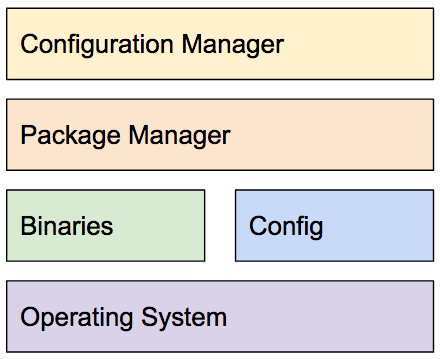

Parts Of The Management Stack

Before we look at Helm, specifically, let’s take a look at different parts of a managed stack. This stack is based on a generality of how existing systems work.

Conceptual stack elements

The components:

- Operating System: GNU/Linux, Windows, Mesos, and even Kubernetes are examples of this. The operating system, in a general sense, is where applications run. Mesos and Kubernetes are more an example of the data center or cluster as a computer than of a single hardware system.

- Binaries: The applications themselves.

- Config: Applications often come with configuration. For example, when MySQL is installed there is configuration like the my.conf file.

- Package Manager: A package manager deals with an individual package. For example, it might deal with installing MySQL. Consider a typical MySQL installation that places the binaries and configuration in the right place while obtaining the default root password to use and configuring MySQL to use it.

- Configuration Manager: Is the application running in production, testing, or someplace else? What specific configuration should be applied to an application running in a particular location? What user should it be running as and what permissions should that system user have? Configuration management sits at a level above the other parts and looks at how the parts work together for specific application instances.

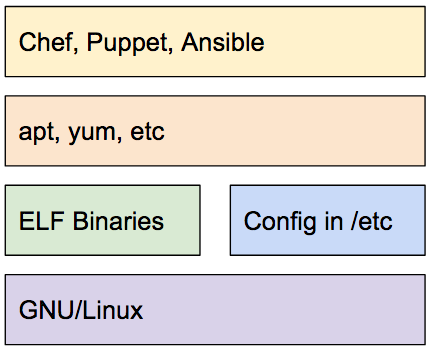

GNU/Linux

To illustrate how these parts work together in practice let’s take a look at a common GNU/Linux situation.

GNU/Linux management stack for applications

Here we have replaced the conceptual components with examples in the ecosystem. Specific package managers – apt and yum – along with specific configuration managers – Chef, Puppet, and Ansible – are displayed.

To revisit the MySQL example in this kind of setup:

- The ELF Binaries are the ones compiled for Linux on the particular architecture.

- The my.conf file goes along with the binary.

- Package managers, like apt and yum, can be used to install MySQL on the system.

- Chef, Puppet, and Ansible can be used to manage MySQL on systems at a higher level and for specific applications.

This can also be seen for custom packages. It’s not unusual for a company to create its own packages (e.g., Debian packages to use with apt and deploy onto Ubuntu). It can then manage those packages with a tool like Chef.

Consider the installation of WordPress, the perennial example. WordPress needs a database. Would a WordPress playbook for Ansible fetch a MySQL binary or use the system’s package manager to install MySQL? It would typically use the package manager to install it, if you were wondering.

This stack, shown simply, is rather well known and works in both the push and pull styles for updates and changes.

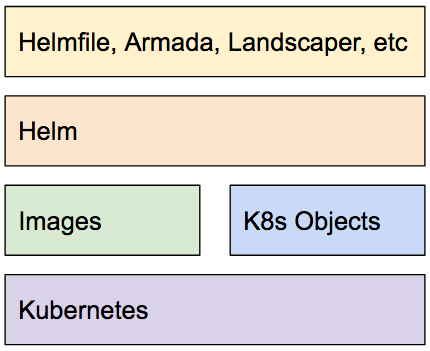

Kubernetes, Helm, And Others

How would this look if you replaced GNU/Linux with a Kubernetes stack?

Kubernetes management stack for applications

For comparisons with the GNU/Linux picture:

- Kubernetes, at the bottom, is equivalent to the operating system and sits where GNU/Linux does. The model is a little different because Kubernetes is used in a data-center-as-a-computer situation rather than on a single system.

- Above Kubernetes you have container images and Kubernetes objects, including Secrets and ConfigMaps with customized settings for the running application instance.

- Over the Kubernetes objects is Helm, a package manager in the same vein as apt, that handles putting things in the right place for the running application.

- The same way Chef, Puppet, and Ansible are used for managing specific applications at that higher level, we have projects like helmfile, armada, landscaper, and others.
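To make the split between the two upper layers concrete, here is a minimal sketch in plain Python. It is not how Helm or helmfile are implemented. It only illustrates the idea that a package ships defaults while a higher-level tool supplies per-environment settings that are layered on top. The names `PACKAGE_DEFAULTS`, `ENVIRONMENT_OVERRIDES`, `merge_values`, and `values_for`, along with every setting shown, are hypothetical.

```python
from copy import deepcopy


def merge_values(defaults: dict, overrides: dict) -> dict:
    """Return a new dict where overrides win; nested dicts are merged key by key."""
    merged = deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical defaults that a packaged application might ship with
# (the package manager layer).
PACKAGE_DEFAULTS = {
    "image": {"repository": "mysql", "tag": "8.0"},
    "persistence": {"enabled": True, "size": "8Gi"},
    "replicas": 1,
}

# Hypothetical per-environment settings kept by the higher-level tool
# (the configuration manager layer).
ENVIRONMENT_OVERRIDES = {
    "testing": {"persistence": {"enabled": False}},
    "production": {"replicas": 3, "persistence": {"size": "100Gi"}},
}


def values_for(environment: str) -> dict:
    """Combine the package defaults with one environment's overrides."""
    return merge_values(PACKAGE_DEFAULTS, ENVIRONMENT_OVERRIDES[environment])


if __name__ == "__main__":
    for env in ENVIRONMENT_OVERRIDES:
        print(env, values_for(env))
```

The package author decides what can be configured and what the sensible defaults are. The tool above it only answers the "which environment is this?" question.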

But, Do I Need A Package Manager?

If you are a Kubernetes expert you might be wondering, why do I need a package manager like Helm? Or maybe you’re bolder and think that, with containers and Kubernetes manifests, there’s no need for a package manager, and that you can use GitOps and Kubernetes configuration files without a need for anything else. Maybe you even want Helm to move into that space and abandon package management.

There are a few things to consider:

- Management of distributed application specific operational expertise. People who want to operate something, for example WordPress, may want to rely on experts in MySQL to specify how to stand that up and operate it. Where do they get that expertise as code and how do they keep up with it? Traditionally, that’s been with package management dependencies. You can depend on a package from someone with expertise so you don’t need to have it and can focus on your specific business needs.

- Reusable organization packages. It’s not unusual for a company to create packages for their custom application (e.g., Debian packages) and then use a configuration manager (e.g., Chef) to install and manage those custom packages. These are custom packages that the operational experts in a company put together and that can be run locally, in testing, and in production in varying, reusable circumstances. This happened before containers and happens today with containers.

- Application specific configuration. Operating applications is about the application, and applications have varying needs that can be configurable. Do you need to collect a root password, for example the way MySQL does? That’s specific to that application and those like it. That same thing does not apply to installing something like wget. How is that information captured and used with a good experience? This is a place where package managers do their work.
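The dependency idea in the first point can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how Helm or apt resolve dependencies. The `CATALOG` contents and the `install_order` function are hypothetical. The point is that the WordPress package only declares that it needs MySQL, and the expertise for standing MySQL up lives in the MySQL package.

```python
def install_order(package: str, dependencies: dict[str, list[str]]) -> list[str]:
    """Return packages in an order where each dependency comes before its dependent."""
    order: list[str] = []
    visiting: set[str] = set()

    def visit(name: str) -> None:
        if name in order:
            return  # already scheduled for installation
        if name in visiting:
            raise ValueError(f"dependency cycle involving {name!r}")
        visiting.add(name)
        for dep in dependencies.get(name, []):
            visit(dep)
        visiting.remove(name)
        order.append(name)

    visit(package)
    return order


# Hypothetical catalog: each package lists the packages it depends on.
CATALOG = {
    "wordpress": ["mysql"],
    "mysql": [],
}

if __name__ == "__main__":
    print(install_order("wordpress", CATALOG))
```

Someone installing WordPress asks for one package and gets the database along with it, without having to know how the database package does its job.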

Much of this comes down to shared reusable expertise targeted around applications. If you are an expert in operating an application you may not need a package manager to install that application for you. If you are an expert in an operating system itself you may know the right place to put startup files, where all config should go, what the config should look like, and want to do it yourself. If you are a typical person operating an application you’re concerned with your application, not the platform it’s running in or the dependencies of your application.

Another way of looking at it: when someone uses kubectl to create and install an application, it’s similar to someone who downloads a binary of an application and runs it. It’s a different use case than package management.

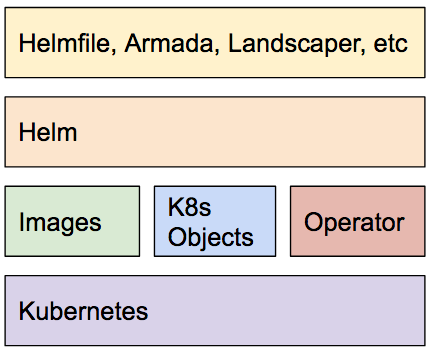

What About Operators?

If you’re wondering where Kubernetes operators sit, or whether they negate the need for Helm, consider the following diagram.

Kubernetes management stack for applications with an operator

From the operator documentation by CoreOS (now part of Red Hat),

An Operator is an application-specific controller that extends the Kubernetes API to create, configure and manage instances of complex stateful applications on behalf of a Kubernetes user. It builds upon the basic Kubernetes resource and controller concepts, but also includes domain or application-specific knowledge to automate common tasks better managed by computers.

Operators are about applications as they are running. They are not about the install, sharing, or re-use experience. They handle application-specific operation. Operators can be installed as part of an application by Helm. They complement Helm and the other tools.

To continue the previous analogy, some packages (e.g., databases) have shipped with extra scripts to manage their life cycle. Operators fit into a similar space.
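To give a feel for what "application-specific knowledge" means for something that is already running, here is a rough plain-Python sketch of the compare-and-act step a controller performs. It does not use the Kubernetes API or any operator framework. The `DatabaseState` type, the `reconcile` function, and the rule about backing up before an upgrade are all hypothetical, chosen only to show where such knowledge would live.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatabaseState:
    """A hypothetical, simplified view of a running database."""
    replicas: int
    version: str


def reconcile(desired: DatabaseState, observed: DatabaseState) -> list[str]:
    """Return the actions needed to move the observed state toward the desired one."""
    actions: list[str] = []
    if observed.version != desired.version:
        # Application-specific knowledge: protect the data before upgrading.
        actions.append(f"back up data before upgrading from {observed.version}")
        actions.append(f"upgrade to {desired.version}")
    if observed.replicas < desired.replicas:
        actions.append(f"add {desired.replicas - observed.replicas} replica(s)")
    elif observed.replicas > desired.replicas:
        actions.append(f"remove {observed.replicas - desired.replicas} replica(s)")
    return actions


if __name__ == "__main__":
    desired = DatabaseState(replicas=3, version="8.0")
    observed = DatabaseState(replicas=1, version="5.7")
    for action in reconcile(desired, observed):
        print(action)
```

Nothing here is about installing, sharing, or reusing the application. That is the part a package manager covers, which is why the two complement each other.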