Reviewing charts, the packages for Kubernetes Helm, is often a manual process. This has been especially true for the community-managed charts, which operate on a model similar to Debian and Ubuntu packages. When automation was used, it sat behind gates that required human interaction, which made feedback slow for chart developers. Until now. In the past couple of weeks, continuous automation has been introduced using CircleCI. Let’s take a look at what’s happening.

Leveraging A SaaS

Testing of charts in the community-managed repository has been gated. To trigger it, a member of the Kubernetes organization commented with /ok-to-test. This caused the chart to be installed and run in a live Kubernetes cluster. A human was kept in the loop to make sure nothing nefarious ran in that cluster.

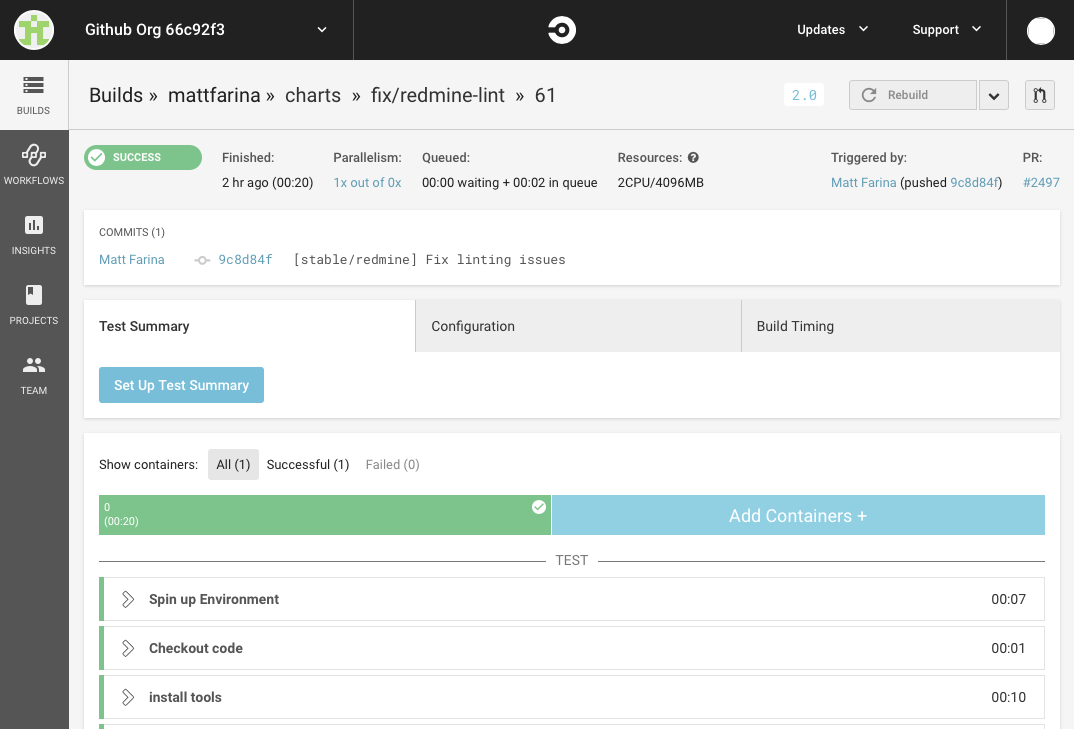

But a lot of testing and linting can happen without running the chart. helm lint, YAML linting, and other static checks can easily be run continuously. Enter CircleCI.

If you’re a fan of other services, like Travis CI, know that we are, too. In fact, the Kubernetes project uses a variety of services. To spread the load, we chose CircleCI in this case.

Helm Lint

When a pull request is submitted, the very first check is to run helm lint on each changed chart. The testing compares the pull request to the master branch to detect which charts the pull request changes, then looks at each of those charts individually.
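As a rough illustration of the change detection described above, the sketch below maps a list of changed file paths to chart directories. The function name `changed_charts` and the `stable` and `incubator` root directories are assumptions made for this example, not the actual CI script. The path list could come from something like `git diff --name-only master...HEAD`.

```python
from pathlib import PurePosixPath


def changed_charts(changed_files, roots=("stable", "incubator")):
    """Return the sorted chart directories touched by a list of changed paths.

    The default roots are an assumption for this sketch; adjust them to
    match the repository layout.
    """
    charts = set()
    for name in changed_files:
        parts = PurePosixPath(name).parts
        # A chart file looks like <root>/<chart-name>/<file...>; skip the rest.
        if len(parts) >= 3 and parts[0] in roots:
            charts.add(f"{parts[0]}/{parts[1]}")
    return sorted(charts)
```

Each returned directory could then be passed to helm lint in turn, so a pull request touching two charts produces two independent lint runs.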

YAML Lint

There’s a difference between a valid YAML file and one that follows best practices. For example, there shouldn’t be extra spaces at the end of a line, and indentation should be consistent throughout the file (e.g., no mixing of 2-space indentation with 4-space indentation).

To automate these checks, a YAML linter is run on the Chart.yaml and values.yaml files.
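To make the two example rules concrete, here is a minimal hand-rolled sketch of what such checks might look like. It is not the linter used in CI, and the function name `style_problems` is hypothetical. It deliberately ignores YAML details such as sequences and multi-line scalars, which a real linter understands.

```python
def style_problems(text):
    """Return (line_number, message) pairs for two simple style problems.

    Simplified on purpose: it only looks at leading spaces and does not
    understand YAML sequences or block scalars.
    """
    problems = []
    step = None   # indentation step, learned from the first nested line
    levels = [0]  # stack of indentation widths currently open
    for number, line in enumerate(text.splitlines(), start=1):
        if line != line.rstrip():
            problems.append((number, "trailing whitespace"))
        content = line.lstrip(" ")
        if not content.strip() or content.startswith("#"):
            continue  # blank lines and comments do not affect indentation
        indent = len(line) - len(content)
        while indent < levels[-1]:
            levels.pop()  # leaving one or more nested blocks
        if indent > levels[-1]:
            increase = indent - levels[-1]
            if step is None:
                step = increase
            elif increase != step:
                problems.append(
                    (number, f"indented by {increase} spaces, expected {step}")
                )
            levels.append(indent)
    return problems
```

Run against a values.yaml that ends a line with a space and nests one block by 4 spaces after another by 2, this reports both lines and leaves a clean file with no findings.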

The template files are not being checked just yet, but I am looking at adding them. Template files can be valid templates without being valid YAML, so they may not pass linting themselves. The YAML files generated from the templates can be linted, though. Because that output differs from the templates, it’s important to find a way to give chart authors useful automated feedback before implementing this form of checking.

Version Increments

Any chart updated in the community repositories needs to have its semantic version incremented. The CI system now checks that the version is valid and has been incremented. No more relying on a person to notice.
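The version check can be sketched roughly as follows, assuming the old and new version strings have already been read from the Chart.yaml on master and in the pull request. The names `precedence` and `is_incremented` are made up for this example, and the validation is a simplified take on Semantic Versioning 2.0.0 rather than the actual CI code.

```python
import re

# MAJOR.MINOR.PATCH with optional -prerelease and +build parts.
SEMVER = re.compile(
    r"(0|[1-9][0-9]*)\.(0|[1-9][0-9]*)\.(0|[1-9][0-9]*)"
    r"(?:-([0-9A-Za-z-]+(?:\.[0-9A-Za-z-]+)*))?"
    r"(?:\+[0-9A-Za-z-]+(?:\.[0-9A-Za-z-]+)*)?"
)


def precedence(version):
    """Return a sortable key for a version, or raise ValueError if invalid."""
    match = SEMVER.fullmatch(version)
    if match is None:
        raise ValueError(f"not a valid semantic version: {version!r}")
    major, minor, patch, pre = match.groups()
    core = (int(major), int(minor), int(patch))
    if pre is None:
        return core + ((1,),)  # a release sorts after any pre-release
    # Numeric identifiers sort numerically and below alphanumeric ones.
    ids = tuple(
        (0, int(part), "") if part.isdigit() else (1, 0, part)
        for part in pre.split(".")
    )
    return core + ((0, ids),)


def is_incremented(old, new):
    """True when `new` is a valid version that sorts strictly after `old`."""
    return precedence(new) > precedence(old)
```

Comparing parsed numbers rather than raw strings matters here: as plain text, 1.10.0 sorts before 1.9.0, while as a semantic version it is the newer one. Build metadata is ignored for ordering.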

NOTES.txt

The NOTES.txt file is an optional template for charts. When Helm installs a chart, it renders this template and prints the result. For charts in the community repositories, the file is required. CI now checks for it and provides direction if it is missing.
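A check like this can be very small. The sketch below assumes the usual chart layout, where NOTES.txt sits in the chart's templates directory. The function name `missing_notes` and the wording of the message are invented for illustration.

```python
from pathlib import Path


def missing_notes(chart_dir):
    """Return a hint if the chart has no templates/NOTES.txt, else None."""
    notes = Path(chart_dir) / "templates" / "NOTES.txt"
    if notes.is_file():
        return None
    # Hypothetical wording; the real CI message may differ.
    return f"{chart_dir}: add templates/NOTES.txt with usage notes for the chart"
```

Returning a message instead of a bare boolean lets the CI job print the direction for the author directly.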

More to come

These CI checks are just the beginning. Automating reviews will shorten the time it takes to merge pull requests, increase the quality of contributions, and hopefully help keep reviewers engaged. There is more feedback that can be automated and presented to chart authors. Look for more to come.